AI

1 - Kiali Chatbot

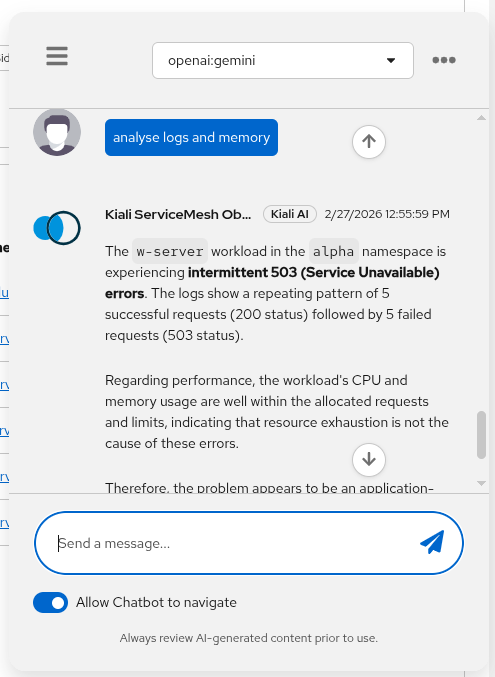

Kiali Chatbot is Kiali’s built-in AI assistant in the Kiali UI. It lets you ask questions about your service mesh and get answers backed by live data from Kiali and its configured backends (Prometheus, tracing, Kubernetes, etc.).

It does not require an external MCP server. Kiali includes its own set of MCP-style tools internally, so the AI can call them without depending on a separate MCP deployment.

Status

The Kiali Chatbot was first released in Kiali 2.22 and is currently in Dev preview.

How does it work

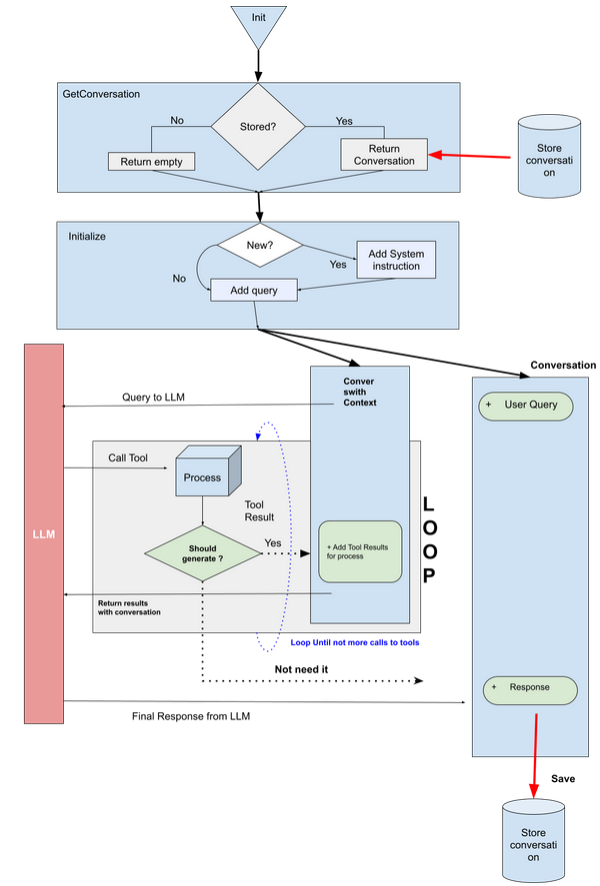

At a high level:

- The Kiali UI sends your chat request (prompt + context + selected model) to the Kiali backend.

- Kiali selects the configured provider/model from chat_ai.

- The provider calls the LLM with a set of internal MCP tools (defined in Kiali under kiali/ai/mcp).

- The LLM may request tool calls (e.g. mesh graph, traces, resource details, workload logs, Istio config operations).

- Kiali executes those tool calls against Kiali/Kubernetes/Prometheus/tracing backends and returns the final answer, including optional UI navigation actions and documentation citations.
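The request/tool-call/answer cycle described above can be sketched as a small loop. This is a minimal illustration, not Kiali's implementation: `fake_model`, `ModelReply`, `run_chat`, and the stubbed tool body are hypothetical stand-ins, and only the tool name `get_mesh_graph` comes from Kiali's tool list.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical registry: the tool name matches a documented tool, the body is a stub.
TOOLS: dict[str, Callable[[dict], dict]] = {
    "get_mesh_graph": lambda args: {"namespace": args["namespace"], "healthy": True},
}


@dataclass
class ModelReply:
    """Either a final text answer or a list of (tool_name, arguments) requests."""
    text: str = ""
    tool_calls: list = field(default_factory=list)


def fake_model(messages: list) -> ModelReply:
    """Stand-in for a provider call: requests one tool, then answers from its result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return ModelReply(tool_calls=[("get_mesh_graph", {"namespace": "bookinfo"})])
    result = tool_results[0]["content"]
    return ModelReply(text=f"Namespace {result['namespace']} healthy: {result['healthy']}")


def run_chat(prompt: str, model=fake_model, max_rounds: int = 5) -> str:
    """Call the model, execute any requested tools, and repeat until it answers."""
    messages = [{"role": "user", "content": prompt}]
    for _ in range(max_rounds):
        reply = model(messages)
        if not reply.tool_calls:
            return reply.text
        for name, args in reply.tool_calls:
            # The backend, not the model, executes the tool and feeds the result back.
            messages.append({"role": "tool", "name": name, "content": TOOLS[name](args)})
    raise RuntimeError("model did not produce a final answer")
```

The `max_rounds` bound is a design choice of this sketch: it keeps a model that never stops requesting tools from looping forever.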

For configuration keys (enable/disable, providers/models, store), see the chat_ai section in the Kiali CR spec.

Tool schemas (inputs/outputs)

Kiali Chatbot uses internal tools with defined input schemas and structured outputs.

Configuring the Kiali Chatbot

The Kiali Chatbot is disabled by default. To enable it, set chat_ai.enabled: true.

When enabled, the chatbot icon appears in the Kiali UI.

You must also configure at least one provider and model (including an API key), and pick a default provider/model.

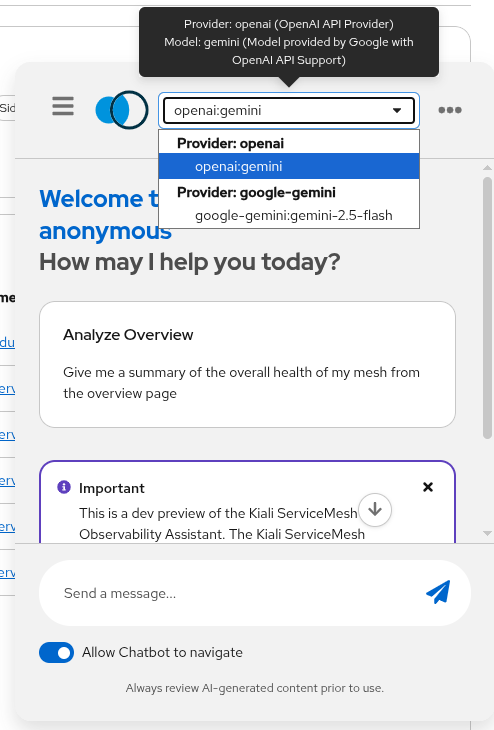

Switching model providers

Kiali Chatbot providers and models are configured in chat_ai:

- Providers: OpenAI-compatible (type: openai) and Google (type: google).

- Models are selected by name (per-provider) and can be enabled/disabled.

- API keys can be set inline (not recommended) or via secret:<secret-name>:<key-in-secret>.
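To make the secret reference format concrete, the following sketch splits a `key` value into its parts. It only illustrates the `secret:<secret-name>:<key-in-secret>` syntax; `SecretRef` and `parse_key` are hypothetical names, and how Kiali itself resolves the reference is not shown here.

```python
from typing import NamedTuple, Optional


class SecretRef(NamedTuple):
    secret_name: str
    key_in_secret: str


def parse_key(value: str) -> Optional[SecretRef]:
    """Return a SecretRef for 'secret:<secret-name>:<key-in-secret>', else None.

    None means the value is treated as an inline API key (an assumption of
    this sketch).
    """
    parts = value.split(":", 2)
    if len(parts) == 3 and parts[0] == "secret" and parts[1] and parts[2]:
        return SecretRef(parts[1], parts[2])
    return None
```

For example, `parse_key("secret:my-key-secret:openai-gemini")` yields the secret name `my-key-secret` and the key `openai-gemini`.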

Example configuration (an OpenAI-compatible provider that reaches Gemini through Google's OpenAI-compatible endpoint):

    chat_ai:
      enabled: true
      default_provider: "openai"
      providers:
        - name: "openai"
          enabled: true
          description: "OpenAI API Provider"
          type: "openai"
          config: "default"
          default_model: "gemini"
          models:
            - name: "gemini"
              enabled: true
              model: "gemini-2.5-pro"
              description: "Model provided by Google with OpenAI API Support"
              endpoint: "https://generativelanguage.googleapis.com/v1beta/openai"
              key: "secret:my-key-secret:openai-gemini"
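Because the defaults must point at entries that exist and are enabled, a quick consistency check over the parsed configuration can catch typos before deployment. The sketch below is an assumption about what a coherent chat_ai block looks like, based on the example above. It is not Kiali's own validation, and `check_chat_ai` is a hypothetical helper.

```python
def check_chat_ai(chat_ai: dict) -> list:
    """Return a list of consistency problems; an empty list means none were found."""
    problems = []
    providers = {p["name"]: p for p in chat_ai.get("providers", []) if p.get("enabled")}
    if not providers:
        return ["no enabled provider is configured"]
    default_provider = chat_ai.get("default_provider")
    if default_provider not in providers:
        problems.append(f"default_provider {default_provider!r} is not an enabled provider")
    for name, provider in providers.items():
        models = {m["name"] for m in provider.get("models", []) if m.get("enabled")}
        default_model = provider.get("default_model")
        if not models:
            problems.append(f"provider {name!r} has no enabled model")
        elif default_model not in models:
            problems.append(f"provider {name!r}: default_model {default_model!r} is not an enabled model")
    return problems


# The example configuration, already parsed into a dict (fields trimmed to those checked).
EXAMPLE = {
    "enabled": True,
    "default_provider": "openai",
    "providers": [
        {
            "name": "openai",
            "enabled": True,
            "type": "openai",
            "default_model": "gemini",
            "models": [{"name": "gemini", "enabled": True, "model": "gemini-2.5-pro"}],
        }
    ],
}
```

Calling `check_chat_ai(EXAMPLE)` returns an empty list, while changing `default_provider` to a name that is not configured produces one problem entry.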

You can also select the configured models and providers in the chatbot window.

What you can ask

Examples of tasks that work well:

- Mesh/namespace topology and summaries (graph, status)

- Basic observability questions (metrics, traces)

- Troubleshooting workflows (get logs for a workload, identify failing namespaces)

Example prompts

- “Show me the mesh graph for namespace bookinfo.”

- “Which workloads in istio-system look unhealthy and why?”

- “Get traces for service productpage in bookinfo for the last 30m.”

Next step

If you want to use an AI assistant outside the Kiali UI (for example, in an IDE), see Kiali MCP.

2 - Kiali Chatbot tools (schemas)

Kiali Chatbot uses internal MCP-style tools (implemented inside Kiali) to fetch live data and perform safe actions. These are not external MCP server tools.

The tool input schemas are defined in Kiali under kiali/ai/mcp/tools/*.yaml. The tool outputs are JSON structures returned by the Kiali backend and consumed by the model and/or UI.

Tool list

- get_action_ui: returns UI navigation actions (buttons/links).

- get_citations: returns documentation links relevant to the user query.

- get_mesh_graph: returns mesh health/topology summaries (and supporting raw payloads).

- get_resource_detail: returns service/workload details or lists (same payload shapes as existing Kiali APIs).

- get_pod_performance: returns usage vs requests/limits summary (CPU/memory).

- get_traces: returns a compact trace summary (bottlenecks/errors).

- get_logs: returns workload/pod logs with optional filtering.

- manage_istio_config: list/get/create/patch/delete Istio objects (with a confirmation gate for sensitive actions).
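The confirmation gate on manage_istio_config can be pictured as follows. This is a sketch under stated assumptions: which actions count as sensitive, the `confirmed` parameter, and the returned status strings are all hypothetical and not taken from the Kiali tool schema.

```python
READ_ACTIONS = {"list", "get"}
# Assumption: every mutating action is treated as sensitive in this sketch.
SENSITIVE_ACTIONS = {"create", "patch", "delete"}


def manage_istio_config(action: str, confirmed: bool = False) -> dict:
    """Run read actions directly; hold mutating actions until the user confirms."""
    if action not in READ_ACTIONS | SENSITIVE_ACTIONS:
        raise ValueError(f"unsupported action: {action}")
    if action in SENSITIVE_ACTIONS and not confirmed:
        # Nothing is changed yet; the caller must ask the user and retry.
        return {"status": "confirmation_required", "action": action}
    return {"status": "executed", "action": action}
```

With a gate like this, a model-initiated `delete` changes nothing until the user has explicitly approved it and the call is repeated with `confirmed=True`.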

3 - Kiali MCP

Kiali MCP is an integration that allows MCP-capable AI assistants to query (and optionally manage) Kiali-related data by calling tools exposed by an MCP server.

The implementation is provided as part of the upstream Kubernetes MCP Server and is also available in the OpenShift MCP server. It exposes a kiali toolset (see the upstream guide: docs/KIALI.md).

Prerequisites

- A reachable Kiali endpoint (Route/Ingress/Service URL).

- Kubernetes credentials available to the MCP server (kubeconfig or in-cluster config).

Enable the kiali toolset

Create a TOML config file and enable kiali in toolsets.

    toolsets = ["core", "kiali"]

    [toolset_configs.kiali]
    url = "https://kiali.example" # Endpoint/route to reach the Kiali console
    # insecure = true # optional: allow insecure TLS (not recommended in production)
    # certificate_authority = "/path/to/ca.crt" # CA bundle for Kiali's TLS cert

Notes:

- If url is https:// and insecure = false, you must provide certificate_authority.

- Authentication to Kiali is performed using the server’s Kubernetes credentials (it obtains/uses a bearer token for Kiali calls).

Connect from an MCP client

How you wire this into a specific client depends on the client, but the core idea is the same: start the MCP server with your kubeconfig and your TOML config.

Example (conceptual) command:

kubernetes-mcp-server --config /path/to/config.toml --read-only

Once connected, your assistant can use the Kiali tools (such as mesh graph, metrics, traces, and workload logs) to power a chatbot-like experience outside the Kiali UI, for example in an IDE.